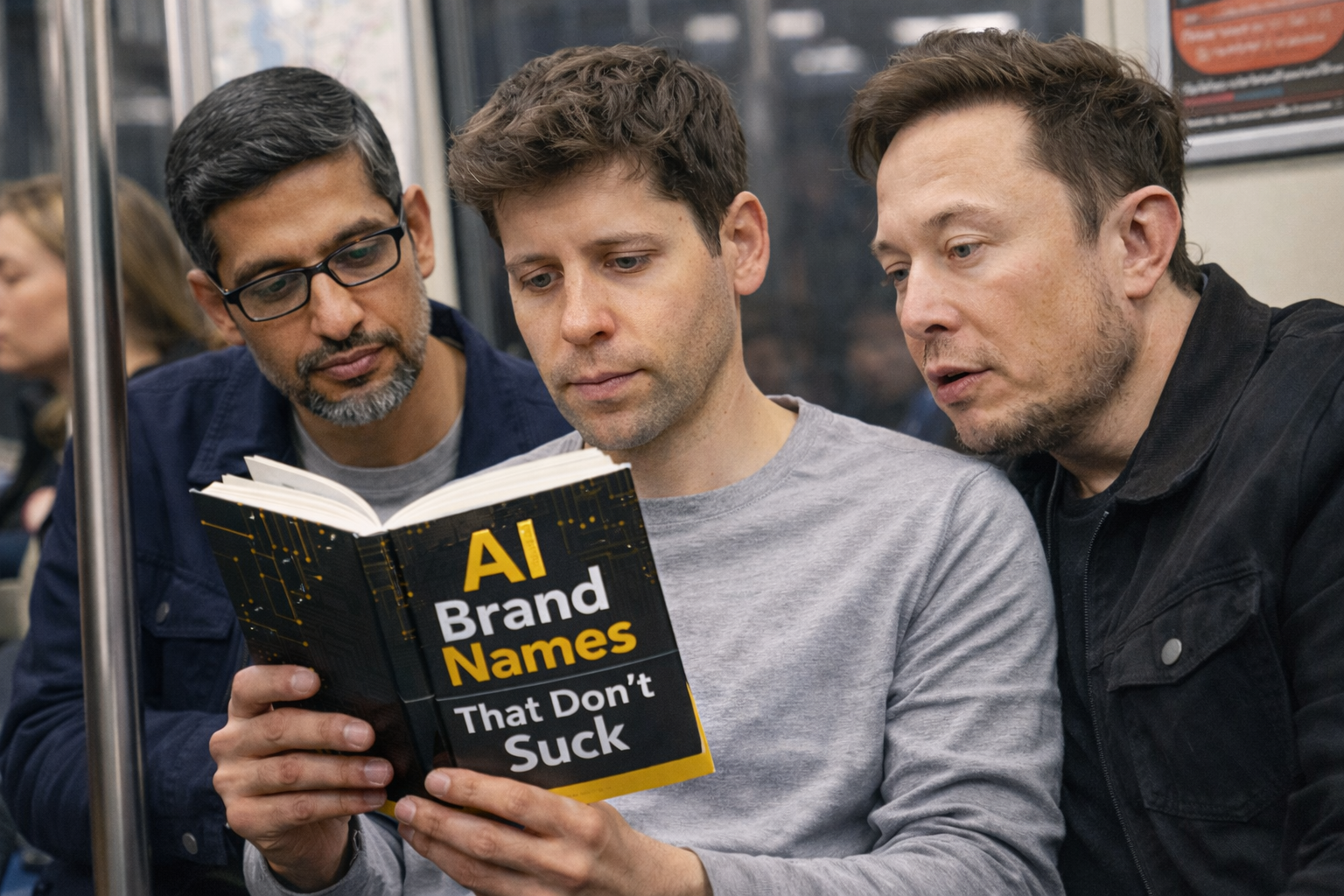

‘AI Brand Names That Don’t Suck’ – Book Marketing With Gemini and ChatGPT

The ‘reply guy’ in me pointed out that public perception of whether the Gemini name ‘sucks’ or ChatGPT sounds funny is not exactly what keeps Sundar Pichai or Sam Altman up at night right now. But then I thought it was a good chance to showcase Google’s Nano Banana Pro and OpenAI’s GPT Image 1.5, the latest image generation models from the AI giants, and their remarkable, much-improved capabilities to:

– Generate highly realistic images of public figures doing mundane tasks in mundane settings.

– Produce high-quality marketing copy for virtually any conceivable junk.